Texas A&M Engineering Experiment Station and Texas A&M AgriLife Research

Principal Investigator

1 January 2016 – 31 October 2016

Total award $106,000

Research

Our research is focused on bridging the scientific gaps between traditional computer science topics and aerospace engineering topics, while achieving a high degree of closure between theory and experiment. We focus on machine learning and multi-agent systems, intelligent autonomous control, nonlinear control theory, vision-based navigation systems, fault-tolerant adaptive control, and cockpit systems and displays. What sets our work apart is a unique systems approach and an ability to seamlessly integrate different disciplines such as dynamics & control, artificial intelligence, and bio-inspiration. Our body of work integrates these disciplines, creating a lasting impact on technical communities ranging from smart materials to General Aviation flight safety, Unmanned Air Systems (UAS), and guidance, navigation & control theory. Our research has been funded by AFOSR, ARO, ONR, AFRL, ARL, AFC, NSF, NASA, FAA, and industry.

Autonomous and Nonlinear Control of Cyber-Physical Air, Space and Ground Systems

Vision Based Sensors and Navigation Systems

Cybersecurity for Air and Space Vehicles

Air Vehicle Control and Management

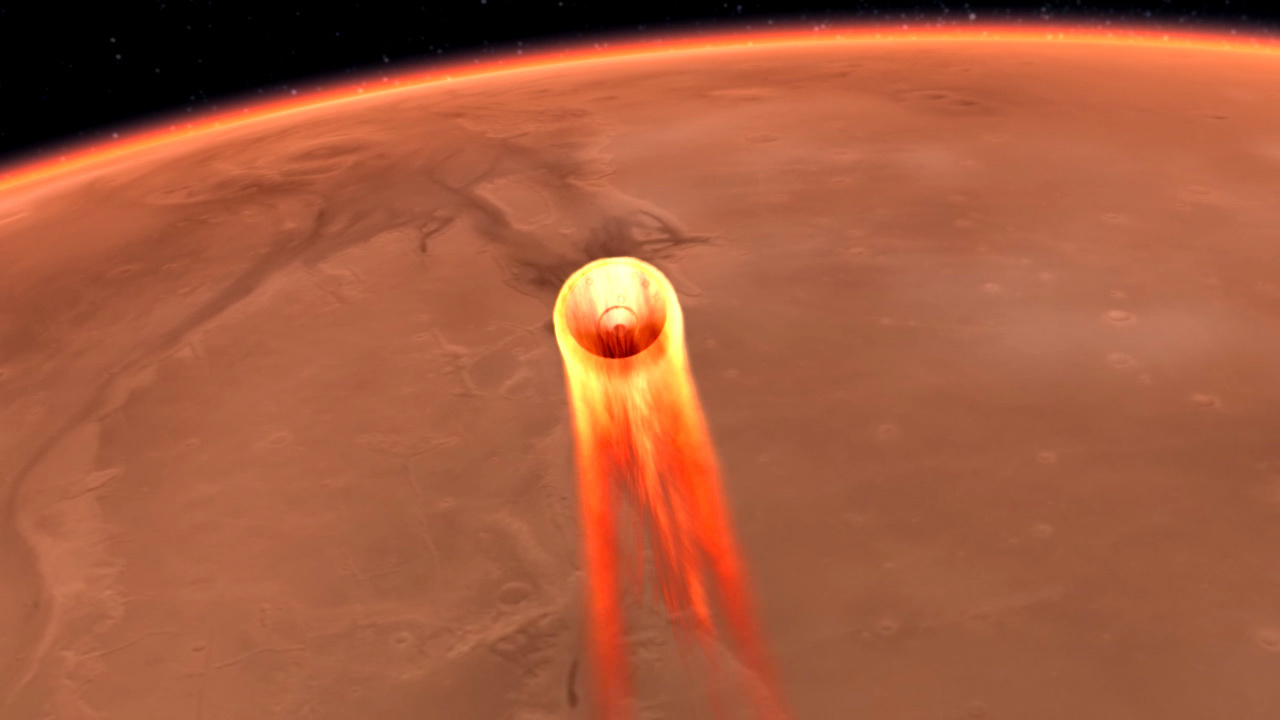

Space Vehicle Control and Management

Advanced Cockpit/UAS Systems and Displays

Control of Bio-Nano Materials and Structures

Utilization of Unmanned Aerial Systems (UAS) for Crop Production and Weed Management Applications

Texas A&M AgriLife Research and Cropping Systems Program

Co-Principal Investigator

1 September 2015 – 31 August 2017

Total award $240,000

The overall goal of this project is to generate the preliminary data necessary for effective utilization of UAS-based imaging techniques for crop production and weed management applications. UAS can be equipped with multi-spectral sensors (3 to 4 bands in the visible and near-infrared/NIR range), hyperspectral sensors (Headwall’s Standard Micro-Hyperspec VNIR, 380-1000 nm spectral range), thermal sensors, and LIDAR (Light Detection And Ranging), among others. These sensors have a multitude of applications in agriculture, in areas such as soil, crop, water, and weed management. However, interpretation of data collected using UAS-based remotely sensed images requires careful consideration of several factors. What is not known are the error estimates between UAS and ground-level data. Such knowledge is key to validating the utility of UAS-based data collection for various applications in agriculture. One of the major limitations so far is the labor-intensive ground data collection and biomass sampling, which will be addressed in this research. We believe that layering UAS data with field measurements will provide rigorous validation of UAS data and the knowledge base required for widespread implementation of UAS-based data collection. The team will use a state-of-the-art manned/unmanned ground platform (UGP) to collect the required ground-truthing information.
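As an illustration of what an error estimate between UAS and ground-level data could look like, the sketch below computes bias, mean absolute error, and root-mean-square error for paired measurements. It is a minimal sketch only: the function name `error_estimates`, the choice of metrics, and the plant-height numbers are assumptions made for illustration, not data or methods from this project.

```python
import math


def error_estimates(uas_values, ground_values):
    """Compare paired UAS-derived values against ground-truth values.

    Returns the bias (mean signed error), mean absolute error (MAE),
    and root-mean-square error (RMSE), all in the units of the inputs.
    """
    if len(uas_values) != len(ground_values) or not uas_values:
        raise ValueError("inputs must be non-empty and of equal length")
    diffs = [u - g for u, g in zip(uas_values, ground_values)]
    n = len(diffs)
    bias = sum(diffs) / n
    mae = sum(abs(d) for d in diffs) / n
    rmse = math.sqrt(sum(d * d for d in diffs) / n)
    return {"bias": bias, "mae": mae, "rmse": rmse}


# Hypothetical plant heights in metres (illustrative values only).
uas_height = [0.52, 0.61, 0.47, 0.70]
ground_height = [0.50, 0.65, 0.45, 0.72]
stats = error_estimates(uas_height, ground_height)
print(stats)
```

A nonzero bias would suggest a systematic offset between the two data sources, while the RMSE summarizes the overall spread of the disagreement.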

TECHNICAL OBJECTIVES

- Quantification of the growth and development of field crops as affected by soil, irrigation and crop management strategies

- Identification and differentiation of weed/plant species, patterns of infestation, and herbicide injury on crops

Working with me on this program are Research Assistants:

- James Henrickson, Ph.D. student

- Cameron Rogers, Ph.D. student

- Zeke Bowden, B.S. student

Unmanned Aerial System (UAS) Data Collection at Brazos Bottom Farm, Phase I

Texas A&M Engineering Experiment Station and Texas A&M AgriLife Research

Principal Investigator

1 February 2015 – 31 October 2015

Total award $139,484

State Constrained Adaptive Flight Control, Phase I

Air Force Research Laboratory, Air Vehicles Directorate

Principal Investigator and Technical Lead

1 September 2015 – 31 December 2015

Total award $22,000

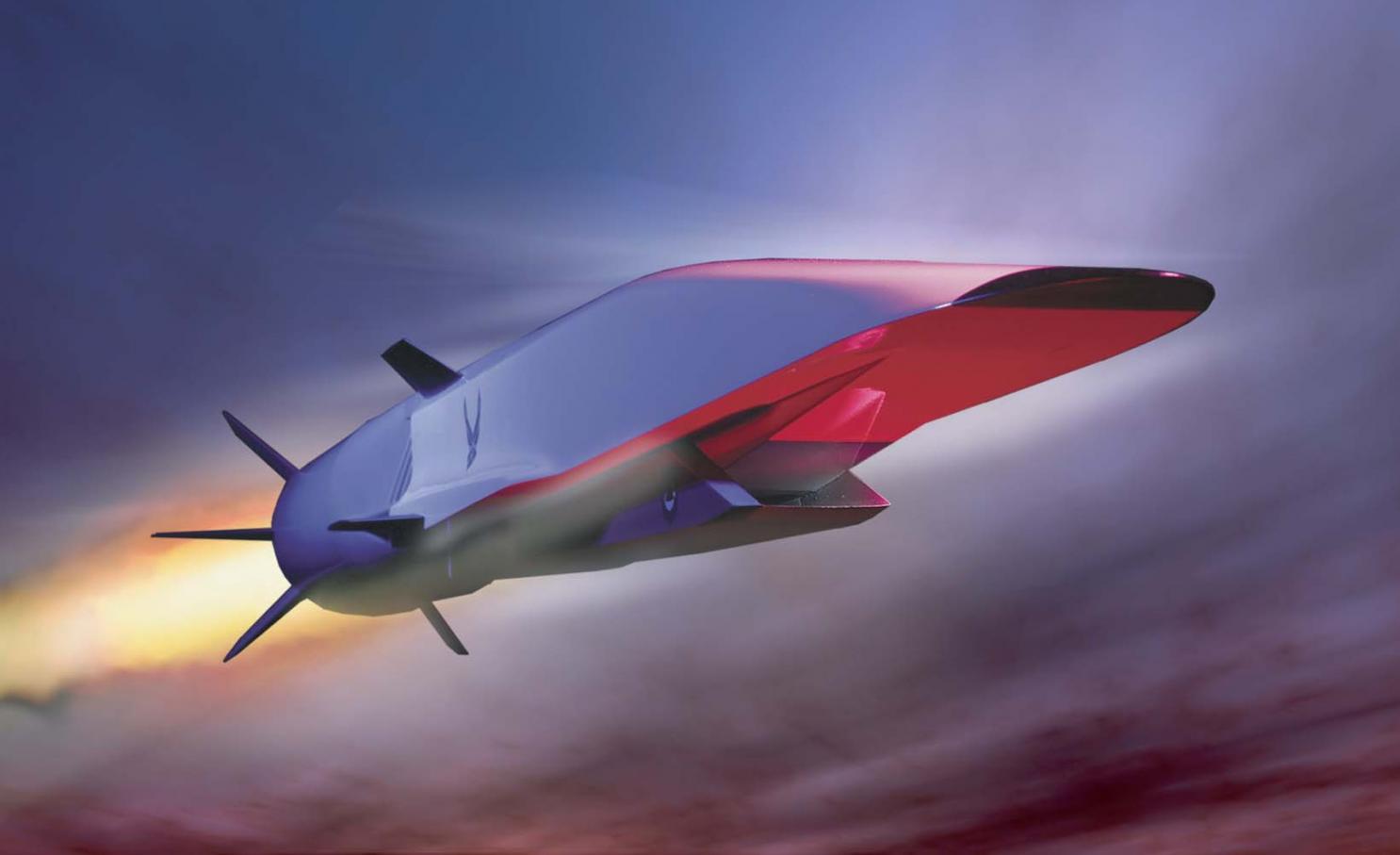

Because of the widely varying flight conditions in which hypersonic vehicles operate and certain aspects unique to hypersonic flight, the development of control architectures for these vehicles presents a challenge. One particular safety concern in hypersonic flight is inlet unstarts, which not only produce a significant decrease in thrust but can also lead to loss of control and possibly the loss of the vehicle. Previous work on control design for hypersonic vehicles has often involved linearized or simplified nonlinear dynamical models of the aircraft, but a better approach is a nonlinear adaptive dynamic inversion control architecture with a control allocation scheme. This approach was shown to handle time delays, perturbations in stability derivatives, and reduced control surface effectiveness while maintaining tracking performance. The technical objective of this effort is to extend the previous work and develop state-constraint enforcement methods for an adaptive nonlinear dynamic inversion architecture. State-constraint enforcement methods are necessary for all classes of aircraft, but are especially important for hypersonic aircraft. When the engine is operating in what is called “dual-mode,” the isolator is susceptible to over-pressure due to combustion, which can result in an inlet unstart. At other points in the flight envelope, an inlet unstart can occur when certain angle-of-attack or sideslip limits are violated. Initial work on enforcement of envelope constraints has been done successfully in the context of an adaptive dynamic inversion control law that assumes full-state feedback.

TECHNICAL OBJECTIVES

- Develop the formal theory for enforcement of both state and input constraints (position and rate limits) while maintaining stability.

- Address an extension of state-constraint enforcement in an output-feedback adaptive dynamic inversion architecture. In this case, the state that must be constrained may not be directly measurable and may therefore have to be estimated.
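To make the phrase "input constraints (position and rate limits)" concrete, the following is a minimal sketch of a conventional actuator limiter that applies a rate limit and then a position limit to a commanded control surface deflection. It illustrates only what such limits are; it is not the state-constraint enforcement theory developed in this effort and carries no stability guarantee. The function name `limit_command` and all numeric values are hypothetical.

```python
def limit_command(command, previous, dt, pos_min, pos_max, rate_max):
    """Apply a rate limit, then a position limit, to a deflection command.

    command  : desired deflection (deg)
    previous : deflection applied at the previous time step (deg)
    dt       : time step (s)
    pos_min, pos_max : position limits (deg)
    rate_max : maximum deflection rate magnitude (deg/s)
    """
    max_step = rate_max * dt
    # Rate limit: the deflection may change by at most max_step per step.
    step = max(-max_step, min(max_step, command - previous))
    limited = previous + step
    # Position limit: clip the result to the allowed deflection range.
    return max(pos_min, min(pos_max, limited))


# Hypothetical values: 30 deg step command, +/-25 deg travel, 100 deg/s rate.
deflection = 0.0
history = []
for _ in range(20):
    deflection = limit_command(30.0, deflection, dt=0.02,
                               pos_min=-25.0, pos_max=25.0, rate_max=100.0)
    history.append(deflection)
print(history[0], history[-1])
```

With these assumed values the surface moves 2 degrees per step until it saturates at the 25 degree position limit, so the 30 degree command is never reached.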

Working with me on this program are Research Assistants:

- Douglas Famularo, Ph.D. student

- Sean Whitney, B.S. student

Characterization of Derived Angle-of-Attack and Flight Path Angle Algorithms for General Aviation Platforms, Phase I

Federal Aviation Administration

Principal Investigator and Technical Lead

1 August 2015 – 30 June 2016

Total award $63,679

This project seeks to exploit derived angle-of-attack (AOA, ##\alpha##) and flight path angles (##\gamma##) from low-cost commercial off-the-shelf (COTS) Attitude Heading Reference Systems (AHRS) found in General Aviation (GA) aircraft. The feasibility of derived AOA will be evaluated for use cases of displays, envelope protection, and fly-by-wire flight control systems. It is expected that the results of this work will be a) recommended minimum performance standards for the algorithm and AHRS device, and b) the criteria for each use case when using AHRS that can be codified into a standard or a circular. The aircraft considered will be Part 23 aircraft (such as C-172, Cirrus SR-22, Lancair 350, and light jets). In addition, hybrid aircraft (Part 23 and Part 27) may be considered. The COTS AHRS to be evaluated are those typically found in aircraft and will include three systems, one from each of the following categories: 1) installed AHRS (such as Garmin, Aspen, Avidyne), 2) portable AHRS (such as iLevil, Stratus, Sagetech), and 3) low-cost AHRS typically found on UAVs (such as Pixhawk, Airware). Phase I will consist of an offline simulation study in the context of intended function, and benchmarks of existing sensors. The simulation engine that will generate inputs to the algorithms is X-Plane 10, used in the VSCL Engineering Flight Simulator. The initial use will be an AHRS modeled as a fully integrated input-output sensor system.

TECHNICAL OBJECTIVES

The proposed work seeks to understand how the various COTS AHRS component characteristics affect the usability of derived AOA solutions by conducting a simulation study which will investigate:

- Sensor Accuracy

- Dynamic response

- Error analysis

- Sensitivity of derived AOA from equations

- Parameters

- GPS update rate

- Vertical airmass motion (steady-state and gust)
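To show why vertical airmass motion matters for a derived AOA, the sketch below uses the textbook wings-level approximation ##\alpha \approx \theta - \gamma##, with pitch ##\theta## taken from the AHRS and ##\gamma## computed from GPS-style climb rate and horizontal speed. This relation, the function names, and all numbers are assumptions for illustration; they are not necessarily the algorithms or equations evaluated in this project, and horizontal wind is assumed to be zero.

```python
import math


def flight_path_angle(climb_rate, horizontal_speed):
    """Flight path angle (rad) from vertical and horizontal speed (m/s)."""
    return math.atan2(climb_rate, horizontal_speed)


def derived_aoa(pitch, climb_rate, horizontal_speed):
    """Wings-level approximation: alpha = theta - gamma (all in rad)."""
    return pitch - flight_path_angle(climb_rate, horizontal_speed)


# Hypothetical flight condition: 5 deg pitch, 2 m/s climb, 50 m/s forward.
pitch = math.radians(5.0)
climb_rate = 2.0          # earth-relative climb rate, e.g. from GPS (m/s)
horizontal_speed = 50.0   # m/s, ground speed equals airspeed with no wind
updraft = 1.0             # assumed steady vertical airmass motion (m/s)

# Earth-referenced estimate, which ignores the moving air mass.
aoa_inertial = derived_aoa(pitch, climb_rate, horizontal_speed)
# Air-relative estimate: subtract the updraft from the climb rate.
aoa_air = derived_aoa(pitch, climb_rate - updraft, horizontal_speed)

error_deg = math.degrees(aoa_air - aoa_inertial)
print(math.degrees(aoa_inertial), math.degrees(aoa_air), error_deg)
```

Under these assumed values, a 1 m/s updraft that the earth-referenced estimate cannot see shifts the derived AOA by roughly one degree, which is the kind of sensitivity a simulation study can quantify.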

Working with me on this program are Research Assistants:

- Madison Treat, M.S. student

Infrastructure Assessment Using Unmanned Air Systems (UAS) and Video Analytics, Phase I

Texas A&M Transportation Institute

Principal Investigator and Technical Lead

1 July 2015 – 31 September 2015

Total award $140,165

It is imperative that infrastructure is properly maintained in order to accommodate the demands of modern society. Information corridors, transportation corridors, and pipelines are an intrinsic part of modern society, as many daily functions depend wholly on their use. The need is to conduct assessments of physical infrastructure such as railroads, bridges, roads, pipelines, and refineries from a specified height above the ground. The assessments consist of structural integrity, wear, and safety inspections. The assessments are traditionally conducted by a mobile team on the ground, with all equipment being field portable. However, many of the assessment items are sited in remote areas, in areas difficult to access from the ground, or possibly in hazardous areas. Therefore, companies are deploying specialized teams and helicopters or short-distance UAS to capture images of the infrastructure elements. Sensors that operate in both the infrared (IR) and visible spectra are typically used. Additional sensors such as Light Detection and Ranging (LIDAR) offer an attractive capability to image an assessment item in three dimensions with defects or damage precisely located on the item, and should be considered. The Center for Autonomous Vehicles and Sensor Systems (CANVASS) is conducting an effort that will culminate in a technology demonstration of a UAS system for infrastructure assessment. All applicable Federal Aviation Regulations (FAR) will be adhered to so that the system can be operated within domestic airspace. The demonstration will be conducted in realistic outdoor laboratory environments and controlled-condition field environments. The effort will focus on integrated multi-spectral sensors (optical, infrared) and video analytics, for the purpose of demonstrating the technology and its benefits. Two preferred systems are being used for all sensor integration and infrastructure element testing.

One is a fixed-wing UAS with a multi-spectral sensor for larger area coverage. This is the CANVASS-owned Anaconda UAS, a proven UAS that possesses the payload capacity, flight performance (45-minute endurance), and rough-field takeoff and landing capability for the proposed work. The second UAS is an octocopter that will also carry the multi-spectral sensor for close-in imaging. This vehicle is the Spread Wings S1000+ professional-quality octocopter. The S1000+ weighs just 4.4 kg and has a maximum takeoff weight of 11 kg. It can easily carry the multispectral camera plus future cameras and payloads. Used with a 6S 15000 mAh battery, it can fly for up to 15 minutes. The UAS systems will be validated, verified, and tested in a series of five flights that will be conducted at the Riverside Range, TAMU Riverside Campus. The Riverside Range is part of the FAA Lone Star UAS Center. The purpose is to verify basic system operation and to collect data on test items of interest at known locations on the test range.

Working with me on this program are Research Assistants:

- James Henrickson, Ph.D. student

- Frank Arthurs, Ph.D. student

- Dipanjan Saha, Ph.D. student

- Joshua Harris, Ph.D. student

- Zeke Bowden, B.S. student

- Tyler Block, B.S. student

- Robert Clever, B.S. student

- Alexx Cisotto, B.S. student

Unmanned Vehicle Autonomous Algorithms

Northrop Grumman Corporation

Unrestricted Research Gift

1 February 2015

Total award $60,000

Working with me on this program are Research Assistants:

- James Henrickson, Ph.D. student

- Joshua Harris, Ph.D. student

Flight Testing of Universal Access Transceiver Datalink on Unmanned Air System

FreeFlight Systems

Co-Principal Investigator and Technical Lead

1 September 2014 – 31 August 2015

Total award $5,000

The Universal Access Transceiver (UAT) ADS-B is a cooperative surveillance technology in which an aircraft determines its position via satellite navigation and periodically broadcasts it, enabling it to be tracked. The information can be received by air traffic control ground stations as a replacement for secondary radar. It can also be received by other aircraft to provide situational awareness and allow self-separation. ADS-B is the first core technology within NextGen, the ongoing program to increase the efficiency, capacity, and safety of the world’s airspace systems. ADS-B is an Air Traffic Management (ATM) surveillance system that will replace traditional radar-based systems. It provides greater accuracy and wider coverage to safely allow reduced separation, more efficient routing, and other benefits. The Vehicle Systems and Control Laboratory (VSCL) will conduct test and evaluation of the RANGR ADS-B System, consisting of flight tests to collect data and assess the suitability of the UAT for use in NextGen airspace and operations.

Working with me on this program are Research Assistants:

- James Henrickson, Ph.D. student

- Douglas Famularo, Ph.D. student

- Frank Arthurs, Ph.D. student

- Dipanjan Saha, Ph.D. student

- Joshua Harris, Ph.D. student

- Samantha Hansen, B.S. student

Unmanned Flight Test of Observation Pod for Precision Agriculture Application

Intuitive Machines

24 March 2014 – 3 September 2014

Co-P.I. Dr. Dale Cope

Co-P.I. Dr. Alex Thomasson

Total award $39,150

As part of its development of integrated sensor systems for UAS platforms, Intuitive Machines requires flight testing of its sensor system for a specific application, such as agriculture, and an independent engineering assessment of the system’s capability to provide the desired results. This project will conduct flight testing of an integrated sensor system for an agriculture application, followed by an engineering assessment of the system’s capabilities. Intuitive Machines is providing the sensor system, and agricultural and aerospace researchers from Texas A&M University are teaming to conduct the flight testing and engineering assessment. Texas A&M University researchers will define the requirements of the sensor system for an agriculture application, integrate the sensor system into the Pegasus II UAS platform, conduct flight tests over a suitable agriculture field, and then assess the system’s capabilities to meet the specified requirements.

TECHNICAL OBJECTIVES

- Define image/resolution requirements for a specific precision agriculture application

- Integrate observation/surveillance pod provided by Intuitive Machines into the Pegasus II UAS

- Conduct flight testing of the observation pod at Texas A&M University’s Riverside Range

- Review and interpret observation data to evaluate effectiveness of sensor pod

Working with me on this program are Research Assistants:

- James Henrickson, Ph.D. student

- Frank Arthurs, Ph.D. student

- Dipanjan Saha, Ph.D. student

- Joshua Harris, Ph.D. student

- Samantha Hansen, B.S. student

Weather Technology in the Cockpit (WTIC): General Aviation Weather Alerting

Federal Aviation Administration

1 January 2014 – 31 December 2014

Co-P.I. Dr. Thomas Ferris

Total award $333,890

The goal of the Weather Technology in the Cockpit (WTIC) General Aviation Weather Alerting project is to assess the feasibility of developing agile, low-latency cockpit weather alerts that identify hazardous weather with minimal pilot analysis. The result of this research will be an increased understanding of the impacts of low latency, alert interface, and assimilation factors on GA pilot decision making, uncertainty, and safety.

The investigation team combines expertise in cockpit displays and interfaces, aviation meteorology, aviation safety, human factors engineering, and pilot training. The team will be led by Dr. John Valasek of Texas A&M University (TAMU), who will serve as Principal Investigator, and Co-Principal Investigator Dr. Thomas Ferris at TAMU. Professor Lori Brown of Western Michigan University (WMU) will lead the efforts at WMU, working with Meteorology Professor Dr. Geoff Whitehurst of WMU, and Dr. William G. Rantz at WMU. Graduate and undergraduate students at each institution will assist the investigators.

TECHNICAL OBJECTIVES

- Increase understanding of the impacts of low latency, weather alerting function interface and assimilation factors on general aviation (GA) pilot decision making, uncertainty, and safety.

- Assess feasibility of agile, low latency cockpit weather alert functions to support pilots in identifying hazardous weather with minimal analytical effort.

- Find beneficial use cases for low latency weather alert functions by identifying high priority scenarios, and consult WTIC program office for verification of these scenarios.

- Perform trade studies on alert implementations and design/develop a prototype.

- Detail whether each suggested alert function is intended for strategic or tactical situations/use cases/scenarios, or both.

- Verify alert prototype benefits.

The project has two 12-month phases. The broad objective of Phase I is to assess the feasibility of agile, low-latency cockpit weather alerting functions that require minimal engineering and pilot analysis. Phase I will serve to gather evidence to continue to the full Phase II study. Phase II will consist of flight evaluations which lead to recommendations for practice. Each phase is detailed below.

Phase I is a study to gather preliminary data to determine alert functions that may be more effective for disseminating weather information to GA pilots compared to traditional graphical representations. It will determine the feasibility (via trade study) of implementing selected alert functions for certain architectures. Under Phase I, a thorough literature review will be conducted to identify any similar recent studies, and to identify existing and potential alert functions to explore further from the results of the analysis. The focus will be on meteorological (MET) information gaps and shortfalls that contribute to the safety risk and that may be mitigated through the use of a more effective weather alert function. This phase will identify which weather alert functions are currently used by the GA population to determine which new alerts may have the potential to reduce accidents. The data will be used to find beneficial use cases for low-latency weather alert functions by identifying high-priority scenarios where an alert would be helpful (icing, turbulence, VMC to IMC, thunderstorm, etc.). Five relevant scenarios will be generated and then validated with cost-effective low-fidelity simulation evaluations, including Human Factors analyses. This will lead to a more extensive flight training device assessment using low-, medium-, and high-time pilots in Phase II. The results of Phase I will include recommendations on what the specific alert function(s) should entail for the validation and verification flight testing program in Phase II.

Phase II will utilize high-fidelity flight training devices for evaluations and to examine specific flight outcomes and potential human factors issues within a large sample of GA pilots.

Working with me on this program are Research Assistants:

- James Henrickson, M.S. student

- Joshua Harris, B.S. student