Texas A&M Engineering Experiment Station and Texas A&M AgriLife Research

Principal Investigator

1 January 2016 – 31 October 2016

Total award $106,000

Research

Our research focuses on bridging the scientific gaps between traditional computer science and aerospace engineering, while achieving a high degree of closure between theory and experiment. Specific areas include machine learning and multi-agent systems, intelligent autonomous control, nonlinear control theory, vision-based navigation systems, fault-tolerant adaptive control, and cockpit systems and displays. What sets our work apart is a unique systems approach and the ability to seamlessly integrate disciplines such as dynamics & control, artificial intelligence, and bio-inspiration. This integration has had a lasting impact on technical communities ranging from smart materials to General Aviation flight safety, Unmanned Air Systems (UAS), and guidance, navigation & control theory. Our research has been funded by AFOSR, ARO, ONR, AFRL, ARL, AFC, NSF, NASA, FAA, and industry.

Autonomous and Nonlinear Control of Cyber-Physical Air, Space and Ground Systems

Vision-Based Sensors and Navigation Systems

Cybersecurity for Air and Space Vehicles

Air Vehicle Control and Management

Space Vehicle Control and Management

Advanced Cockpit/UAS Systems and Displays

Control of Bio-Nano Materials and Structures

Precision Agriculture

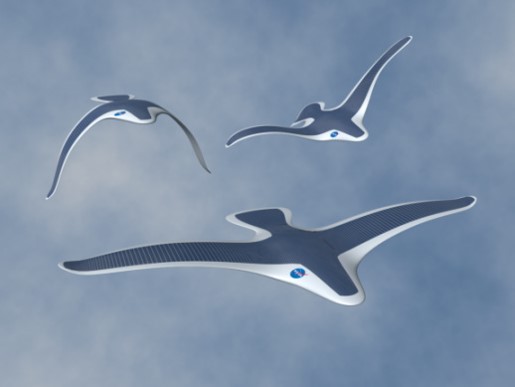

Utilization of Unmanned Aerial Systems (UAS) for Crop Production and Weed Management Applications

Texas A&M AgriLife Research and Cropping Systems Program

Co-Principal Investigator

1 September 2015 – 31 August 2017

Total award $240,000

The overall goal of this project is to generate the preliminary data needed to use UAS-based imaging techniques effectively for crop production and weed management applications. UAS can be equipped with multispectral sensors (3 to 4 bands in the visible and near-infrared/NIR range), hyperspectral sensors (Headwall’s Standard Micro-Hyperspec VNIR, 380–1000 nm spectral range), thermal sensors, and LIDAR (Light Detection And Ranging), among others. These sensors have many agricultural applications, including soil, crop, water, and weed management. However, interpreting UAS-based remotely sensed images requires careful consideration of several factors. In particular, the error between UAS-derived estimates and ground-level measurements is not yet known, and such knowledge is key to validating the utility of UAS-based data collection for various agricultural applications. A major limitation so far has been labor-intensive ground data collection and biomass sampling, which this research will address. We believe that layering UAS data with field measurements will provide rigorous validation of UAS data and the knowledge base required for widespread implementation of UAS-based data collection. The team will use a state-of-the-art manned/unmanned ground platform (UGP) to collect the required ground-truth information.
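To make the idea of an error estimate between UAS and ground-level data concrete, here is a minimal Python sketch. It assumes the UAS-derived values (for example, plant height or biomass estimates) and the ground-truth measurements have already been paired plot by plot. The function name `uas_error_estimates` and the choice of mean bias, MAE, and RMSE as metrics are illustrative assumptions, not the project's published method.

```python
import math
from typing import Sequence


def uas_error_estimates(uas: Sequence[float], ground: Sequence[float]) -> dict[str, float]:
    """Compare paired UAS-derived values against ground-truth measurements.

    Hypothetical helper (illustration only): index i is assumed to refer to
    the same plot, measured once from UAS imagery (uas[i]) and once on the
    ground (ground[i]).
    """
    if len(uas) != len(ground) or not uas:
        raise ValueError("need equal-length, non-empty paired measurements")

    # Residual = UAS estimate minus ground truth, so a positive mean bias
    # means the UAS over-estimates on average.
    residuals = [u - g for u, g in zip(uas, ground)]
    n = len(residuals)

    bias = sum(residuals) / n                        # systematic offset
    mae = sum(abs(r) for r in residuals) / n         # mean absolute error
    rmse = math.sqrt(sum(r * r for r in residuals) / n)  # root-mean-square error
    return {"bias": bias, "mae": mae, "rmse": rmse}
```

Reporting the mean bias alongside RMSE separates a systematic offset, which could in principle be calibrated out, from the random scatter that remains.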

TECHNICAL OBJECTIVES

- Quantification of the growth and development of field crops as affected by soil, irrigation and crop management strategies

- Identification and differentiation of weed/plant species, patterns of infestation, and herbicide injury on crops

Working with me on this program are Research Assistants:

- James Henrickson, Ph.D. student

- Cameron Rogers, Ph.D. student

- Zeke Bowden, B.S. student

Unmanned Aerial System (UAS) Data Collection at Brazos Bottom Farm, Phase I

Texas A&M Engineering Experiment Station and Texas A&M AgriLife Research

Principal Investigator

1 February 2015 – 31 October 2015

Total award $139,484

Unmanned Flight Test of Observation Pod for Precision Agriculture Application

Intuitive Machines

24 March 2014 – 3 September 2014

Co-P.I. Dr. Dale Cope

Co-P.I. Dr. Alex Thomasson

Total award $39,150

As part of its development of integrated sensor systems for UAS platforms, Intuitive Machines requires flight testing of its sensor system for a specific application, such as agriculture, and an independent engineering assessment of the system’s capability to provide the desired results. This project will conduct flight testing of an integrated sensor system for an agricultural application, followed by an engineering assessment of the system’s capabilities. Intuitive Machines is providing the sensor system, and agricultural and aerospace researchers from Texas A&M University are teaming up to conduct the flight testing and engineering assessment. Texas A&M University researchers will define the sensor system requirements for an agricultural application, integrate the sensor system into the Pegasus II UAS platform, conduct flight tests over a suitable agricultural field, and then assess the system’s capability to meet the specified requirements.

TECHNICAL OBJECTIVES

- Define image/resolution requirements for a specific precision agriculture application

- Integrate observation/surveillance pod provided by Intuitive Machines into the Pegasus II UAS

- Conduct flight testing of the observation pod at Texas A&M University’s Riverside Range

- Review and interpret observation data to evaluate effectiveness of sensor pod

Working with me on this program are Research Assistants:

- James Henrickson, Ph.D. student

- Frank Arthurs, Ph.D. student

- Dipanjan Saha, Ph.D. student

- Joshua Harris, Ph.D. student

- Samantha Hansen, B.S. student