Raytheon Company, Intelligence and Information Systems

1 August 2010 – 31 January 2011

Total award $45,000

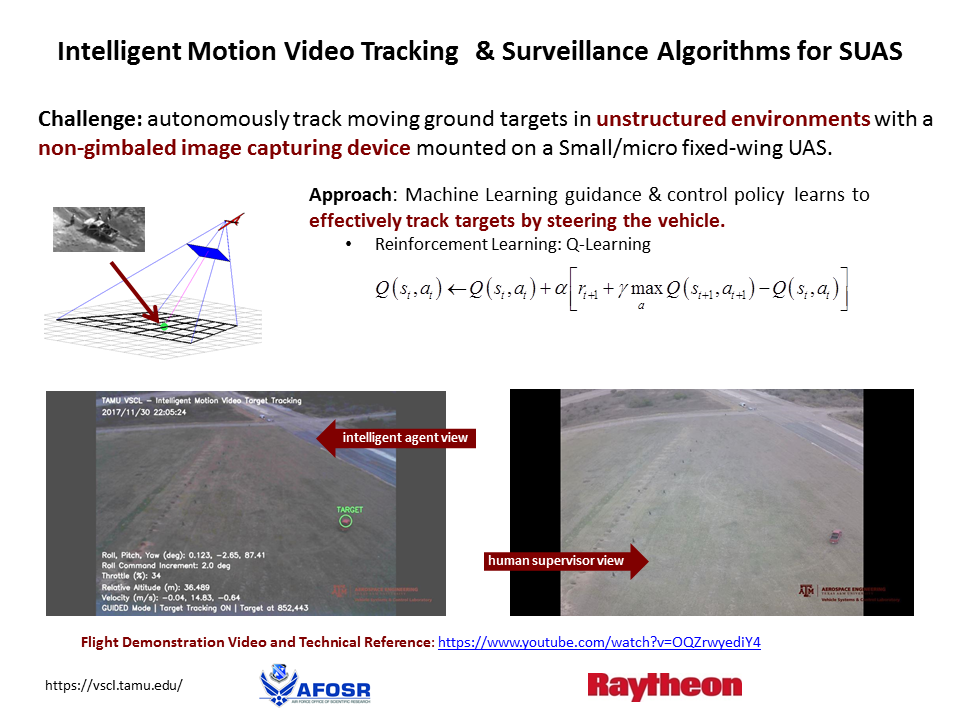

Advances in unmanned flight have led to the development of Unmanned Air Systems (UASs) that are capable of carrying state-of-the-art video capturing systems for the intended purpose of surveillance and tracking. UASs have the capability to fly through a target area with a mounted camera and allow humans to operate both the UAS and the camera to attempt to survey any objects that are deemed targets. These systems have worked well when controlled by humans, but having them operate autonomously is more challenging.

One way to introduce the concept of autonomy to this problem is to determine a control policy that is capable of controlling the UAS autonomously along a certain trajectory while having the camera controlled by a human. Another way is to do the opposite, and have the UAS flown manually while the camera gimbals to capture and track identified targets. Both of these methods have been explored before and have merit, but having both the UAS and the camera operated autonomously could provide greater flight and tracking efficiency. Having a system that is capable of controlling a UAS and camera system to keep a selected target visible in the camera screen would free the human supervisor to focus on selecting viable targets and analyzing the images received.

The biggest challenge stems from the need to determine an optimal control policy for keeping the target in the middle of the image. Conventional control techniques require determining an appropriate cost function and then finding the weights that make the control optimal. Although finding the optimal control is often straightforward, determining the cost function that best describes the problem is not straightforward. For this research, Reinforcement Learning (RL) is utilized for the determination of the optimal control policy that will both gimbal the camera and steer the UAS to provide target tracking.

The specific RL algorithm used is Q-Learning with Adaptive Action Grid (AAG), developed by Lampton and Valasek as a means to provide greater accuracy in reaching the goal state (i.e., the target), while also decreasing the size (dimensions) of the state-space to be considered. This dramatically decreases the total number of states in the system, so that the learning time becomes more feasible and the storage requirements more tractable. The objective of the approach is to bring any target located in an image captured by a camera into the center of the image, using the AAG learned control policy described above. The learning agent will determine offline (initially) how to control the UAS and camera to get a target from any point in the image to the center and hold it there. A feature of this approach is that the learning agent will continue to learn and refine and update the previously offline learned control policy, during actual operation.

Working with me on this program are Graduate Research Assistants:

- Kenton Kirkpatrick, Ph.D. student

- Jim May, M.S. student