Phase I: Vehicle modeling, simulation development, and preliminary control law synthesis

NASA Johnson Space Center

1 August 2008 – 31 July 2009

Total award $129,569

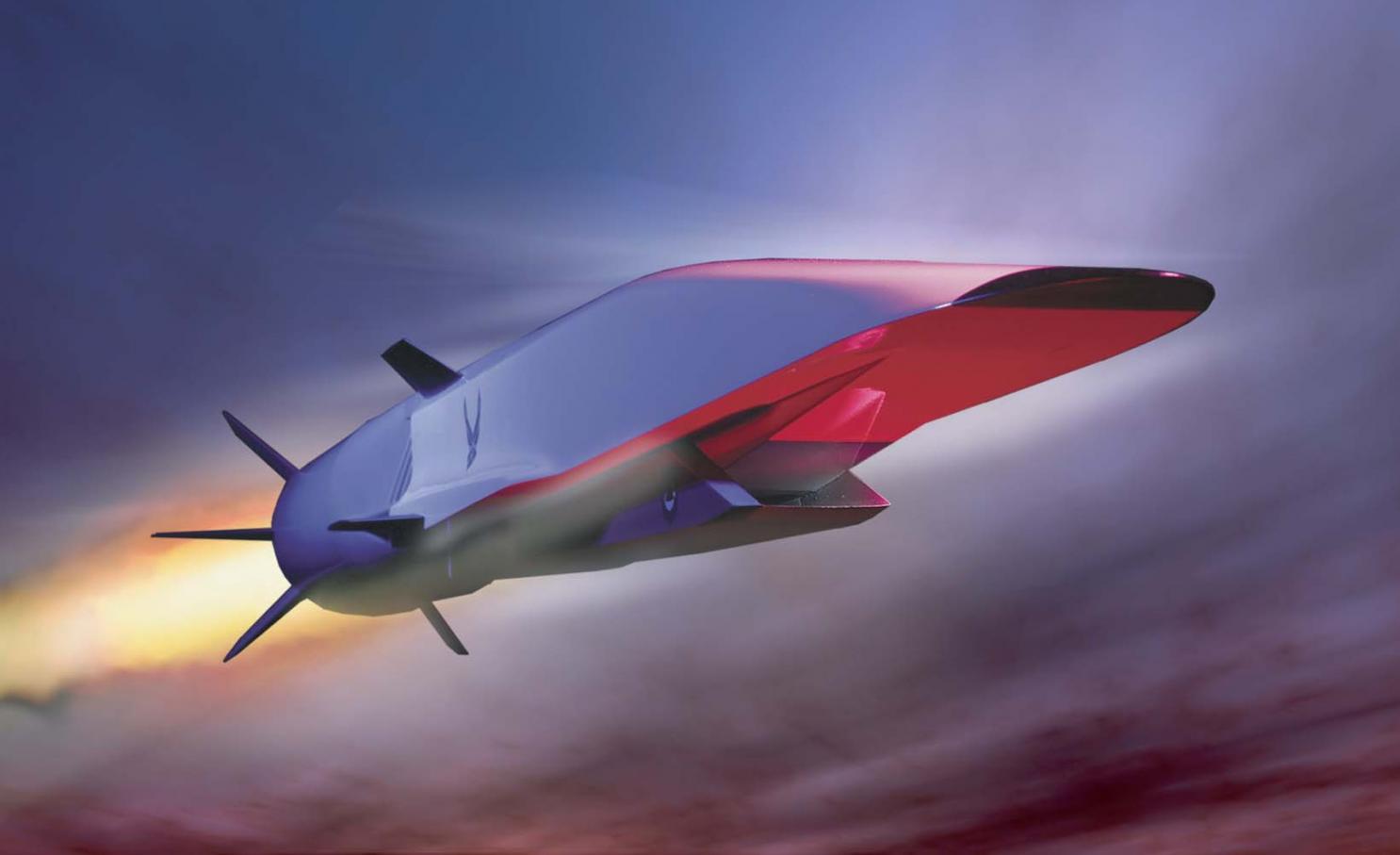

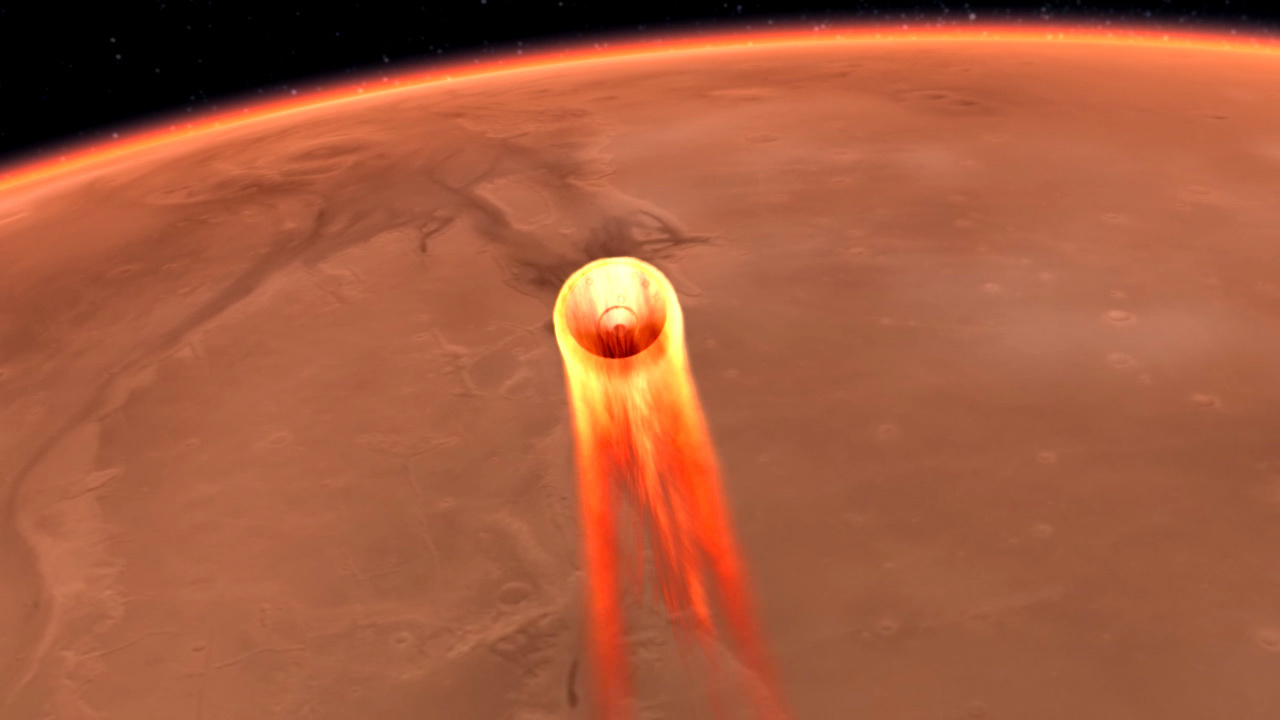

The next generation of vehicles that will take humans to the Moon or Mars must be much more reliable and safer than both the manned (Space Shuttle Orbiter) and unmanned (e.g., Cassini, Mars Rover) vehicles currently in use. Fault-tolerant control systems that autonomously adapt to, and safely and predictably recover from, various equipment and system failures will be absolutely necessary. The Orion Crew Exploration Vehicle (CEV) program requires automated capability for numerous Guidance, Navigation, and Control (GN&C) functions during the ascent phase of flight, particularly the automated execution of ascent abort scenarios. Ascent control is also a significant and challenging control problem because of elastic body modes, environmental uncertainties, possible control faults, and the need to be highly adaptable to possible mission aborts.

This program seeks to conduct applied research to support current and future NASA goals in the area of launch vehicles (near term) and landers (intermediate term) by investigating and developing new approaches for the fault- and abort-tolerant ascent control of launch vehicles. The focus will be on novel and non-traditional control methods that have the potential to significantly improve current levels of safety in manned launch vehicles. Specifically, during the theory and algorithm development stage of this research, we will investigate ways to combine intelligent control techniques with adaptive control systems. This combination is intended to handle time-varying parameters and environmental disturbances, while also providing a decision support function and the capability to learn and handle abort scenarios.

Adaptive-Reinforcement Learning Control (A-RLC), an intelligent autonomous control methodology developed by the Vehicle Systems & Control Laboratory at A&M University and previously used for the control of aircraft and planetary entry vehicles, will be extended and tailored for the ascent phase of launch vehicles. A-RLC can make use of a variety of Machine Learning techniques, and determining the most efficient, implementable, and verifiable one will be a major task of the proposed work.

One goal of the research will be to investigate and quantify the benefits / tradeoffs of using alternative and non-traditional approaches to fault tolerance and handling aborts, by determining the most efficient, implementable, and verifiable set from the following candidate list:

- Intelligent Learning and Control

- Machine Learning

- Reinforcement Learning

- Neural Networks

- Fuzzy Logic

- Advanced Learning / Function Approximation Techniques

The approach taken for the proposed research will be to develop a hierarchical controller that combines nonlinear Fault Tolerant Structured Adaptive Model Inversion Control (SAMI) with Adaptive-Reinforcement Learning Control (A-RLC), tailored specifically to launch vehicles. In this scheme, Fault Tolerant SAMI provides the fault tolerance capability. SAMI is well suited to this application because it is flexible enough to handle a variety of system types, and because it does not require a fault detection scheme. The launch abort handling capability will be provided by A-RLC, which will learn how to safely and effectively handle non-nominal situations.
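To make the idea of fault tolerance without fault detection more concrete, the following is a minimal sketch of adaptive model inversion on a scalar toy plant. It is not the SAMI formulation used in this program and not a launch vehicle model. The plant `x_dot = theta * x + u`, the gains `k`, `gamma`, and `a_m`, the fault (a jump in `theta` at `fault_time`), and the function name `simulate` are all assumptions chosen for illustration. The controller inverts its current estimate of the dynamics and updates that estimate from the tracking error alone, so it is never told that a fault occurred.

```python
def simulate(t_end=20.0, dt=1e-3, fault_time=10.0):
    """Scalar adaptive model inversion with an unannounced parameter fault.

    Hypothetical toy plant: x_dot = theta * x + u, with theta unknown to
    the controller. All numerical values below are illustrative assumptions.
    """
    k = 5.0        # tracking-error feedback gain (assumed)
    gamma = 10.0   # adaptation gain (assumed)
    a_m = 2.0      # reference-model bandwidth (assumed)
    r = 1.0        # constant commanded value

    x = 0.0          # plant state
    x_ref = 0.0      # reference-model state
    theta_hat = 0.0  # controller's estimate of theta
    peak_after_fault = 0.0

    steps = int(round(t_end / dt))
    for i in range(steps):
        t = i * dt
        # The "fault": the true parameter jumps; the controller is not informed.
        theta = 1.0 if t < fault_time else 3.0

        e = x - x_ref                    # tracking error
        x_ref_dot = a_m * (r - x_ref)    # first-order reference model

        # Model inversion using the current estimate, plus error feedback.
        u = -theta_hat * x + x_ref_dot - k * e

        # Lyapunov-based update law; it is driven only by the tracking error.
        theta_hat_dot = gamma * e * x

        # Forward-Euler integration of plant, reference model, and estimate.
        x += dt * (theta * x + u)
        x_ref += dt * x_ref_dot
        theta_hat += dt * theta_hat_dot

        if t >= fault_time:
            peak_after_fault = max(peak_after_fault, abs(x - x_ref))

    return x - x_ref, peak_after_fault


if __name__ == "__main__":
    final_error, peak_error = simulate()
    print("final tracking error:", final_error)
    print("peak error after fault:", peak_error)
```

In this sketch the tracking error grows briefly when the parameter jumps and then decays again as the estimate readapts. A practical scheme for a launch vehicle would be multivariable and would have to account for elastic modes, actuator limits, and disturbances, none of which are modeled here.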

Working with me on this program are Graduate Research Assistants:

- Monika Marwaha, Ph.D. student

- Amanda Lampton, Ph.D. student

- Anshu Narang, Ph.D. student